The Architectures of Autonomy: AI Memory, Context Protocols, and the Economic Reality of Agentic Systems

This piece argues that production-grade autonomy depends less on raw model intelligence and more on memory governance, context architecture, orchestration discipline, and operational economics. The result is a new engineering center of gravity: context becomes the control plane for intelligence. That helps explain why AI has not fully replaced engineers in Big Tech, at least while the LLM scaling plateau persists ...

Aggrey

7 min read · Updated Feb 19, 2026

The story of modern AI is not just about better models. It is about better memory. As AI moved from scripted bots to LLM-powered agents, the hard problem shifted from “Can the model generate text?” to “Can the system reliably manage context over time?” That shift is what separates a demo from a production system.

In traditional software, memory is static: data is stored and retrieved with explicit rules. In agentic AI, memory becomes managed context, a dynamic blend of conversation history, retrieved knowledge, goals, tool outputs, execution state, and reflection. This is why the current generation of AI engineering is increasingly about context architecture, not just model performance.

“Static memory stores data. Managed memory governs relevance.”

1. From Stateless Bots to Managed Memory

AI memory has evolved in stages. Pre-2010 bots were rule-based systems: deterministic, brittle, and essentially stateless. They could appear conversational, but each turn was mostly processed in isolation. That made them predictable in narrow tasks and ineffective in open-ended ones.

The 2010s introduced machine-learning chatbots with intent classification and slot filling. They tracked shallow session state (destination, date, account number), but that memory was still more like a partially completed form than a dynamic understanding of context. If users deviated from the expected path, failure rates rose quickly.

Transformer LLMs changed the architecture by introducing the context window as a practical short-term working memory. Models could reason across multi-turn dialogue and instructions, but this memory remained finite, expensive, and vulnerable to bloat as irrelevant tokens accumulated.

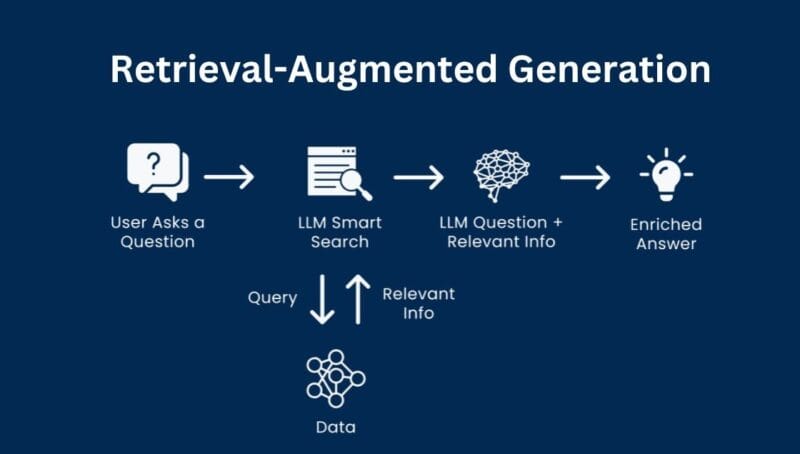

2. RAG and the Shift to Managed Context

Retrieval-Augmented Generation (RAG) extended AI memory beyond the prompt window by allowing systems to fetch relevant material from external sources like documents, databases, and indexes at runtime. This transformed context from a growing transcript into a curated working set.

That shift created the idea of managed memory: a system that not only stores information but also governs what enters the model’s attention. It retrieves selectively, summarizes old history, preserves task state, and prioritizes relevance over recency.

The practical distinction is simple: static memory stores, managed memory governs. Production systems need more than a vector database. They need policies for summarization, pruning, persistence, and escalation.
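To make "governs what enters the model's attention" concrete, here is a minimal sketch of one possible policy: always keep pinned task state, then fill whatever token budget remains by relevance rather than recency. The `ContextItem` type, the `relevance` score, and the word-count token estimate are all assumptions made for illustration, not part of any particular framework.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    text: str
    relevance: float      # hypothetical score in [0, 1], e.g. from a retriever
    pinned: bool = False  # task state that must always be kept


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def build_context(items: list[ContextItem], budget: int) -> list[ContextItem]:
    """Keep pinned items, then add the most relevant items that still fit the budget."""
    selected = [item for item in items if item.pinned]
    used = sum(estimate_tokens(item.text) for item in selected)
    candidates = sorted(
        (item for item in items if not item.pinned),
        key=lambda item: item.relevance,
        reverse=True,
    )
    for item in candidates:
        cost = estimate_tokens(item.text)
        if used + cost <= budget:
            selected.append(item)
            used += cost
    return selected


items = [
    ContextItem("Goal: rebook the cancelled flight", 1.0, pinned=True),
    ContextItem("User said hello and asked about the weather", 0.1),
    ContextItem("Policy: rebooking is free within 24 hours", 0.9),
]
chosen = build_context(items, budget=13)
```

With a budget of 13 "tokens", the goal and the policy fit, and the small talk is pruned. A production policy would also summarize what it drops instead of discarding it outright.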

3. Why Bigger Models Alone Do Not Solve Reliability

A common misconception is that model improvements alone will make agent systems reliable. In practice, many failures emerge from orchestration and context management, not from raw model capability. The LLM may reason well, but if it receives noisy context, stale state, or ambiguous tool outputs, the entire workflow degrades.

Production systems therefore require three distinct memory layers working together: context-window memory (what is in the prompt), external memory (retrieved facts and records), and execution state (plans, tool outputs, retries, and task status). This is why context engineering increasingly looks like operating-system design rather than prompt tweaking.
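One way to picture the three layers is as separate data structures that are combined only when the prompt is rendered. The sketch below is illustrative: the class names, fields, and the `render_prompt` helper are assumptions, not an established schema.

```python
from dataclasses import dataclass, field


@dataclass
class ExecutionState:
    plan: list[str] = field(default_factory=list)
    tool_outputs: dict[str, str] = field(default_factory=dict)
    retries: int = 0
    status: str = "pending"


@dataclass
class AgentMemory:
    context_window: list[str] = field(default_factory=list)  # what is in the prompt
    external: dict[str, str] = field(default_factory=dict)   # retrieved facts and records
    execution: ExecutionState = field(default_factory=ExecutionState)

    def render_prompt(self, fact_keys: list[str]) -> str:
        """Merge the layers into one string; only this string reaches the model."""
        facts = [f"{key}: {self.external[key]}" for key in fact_keys if key in self.external]
        state = f"status={self.execution.status}, retries={self.execution.retries}"
        return "\n".join([*self.context_window, *facts, state])


memory = AgentMemory(
    context_window=["User: where is my order?"],
    external={"order_42": "shipped"},
)
prompt = memory.render_prompt(["order_42", "missing_key"])
```

Keeping the layers apart means each one can have its own retention and pruning rules, which is the operating-system flavor of the design.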

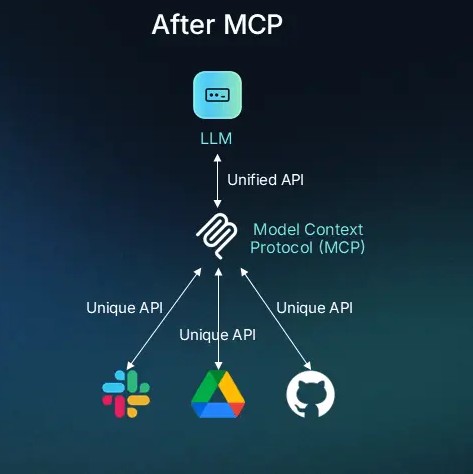

4. MCP and the Standardization of Context Plumbing

As AI apps began interacting with tools and enterprise data, teams were forced to build custom integrations repeatedly. The Model Context Protocol (MCP) addresses this by standardizing how LLM applications connect to external tools and context providers. In effect, MCP provides an interoperability layer for AI systems.

This matters because it cleanly separates concerns. MCP handles communication and capability exchange, i.e., how hosts, clients, and servers talk to each other. It does not, by itself, define how agents plan, reflect, or manage memory. That remains the job of agent frameworks and context-engineering approaches.

In mature stacks, these layers complement each other: protocol standards reduce integration friction, while agentic context systems govern behavior and reliability.
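For a feel of what "communication and capability exchange" looks like on the wire, the sketch below builds a tool-call request in the JSON-RPC 2.0 shape that, as I understand the published MCP specification, clients send to servers. The tool name `lookup_account` and its arguments are hypothetical, and a real client would normally use an MCP SDK rather than hand-built JSON.

```python
import json
from itertools import count

_request_ids = count(1)  # simple per-process request id generator


def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking a server to run one tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool and arguments, for illustration only.
message = make_tool_call("lookup_account", {"account_id": "A-1"})
```

Nothing in this message says when to call the tool, whether to trust its output, or what to remember afterward. Those decisions stay with the agent layer.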

5. LangChain and LangGraph: Useful, Flexible, and Wiring-Heavy

LangChain and LangGraph are widely used because they provide a rich ecosystem of components for prompts, tools, retrievers, and agent orchestration. They are especially strong for prototyping, modular RAG applications, and workflows that need rapid iteration.

The trade-off appears as systems scale: robust behavior often requires explicit wiring for state transitions, retries, error handling, reflection loops, and human intervention. The frameworks remain flexible, but reliability becomes a discipline the team must build, not something that emerges automatically.

This does not make LangChain 'bad'; it means it is often best understood as an LLM application toolkit. It is excellent for assembly and experimentation, and weaker as a default governance layer for long-lived, high-stakes agent systems unless teams add significant structure.
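The explicit wiring mentioned above tends to look like the following in practice: a wrapper that retries a step with backoff and escalates to a human once retries run out. This is plain Python rather than LangChain or LangGraph code, and `run_with_retries` and `EscalationRequired` are names invented for this sketch.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class EscalationRequired(Exception):
    """Raised when automated retries are exhausted and a human should step in."""


def run_with_retries(step: Callable[[], T], max_attempts: int = 3, base_delay: float = 0.0) -> T:
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except Exception as error:  # a real system would catch narrower error types
            last_error = error
            if attempt < max_attempts:
                time.sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
    raise EscalationRequired(f"step failed after {max_attempts} attempts") from last_error
```

The point is less the dozen lines than the fact that someone has to write, test, and own them for every step that can fail.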

6. OpenClaw and the Operational Reality of Powerful Agents

OpenClaw illustrates the other side of autonomy: the power and risk of always-on agents with real tool access. Runtimes like this can act across channels and environments, often with persistent credentials. That capability is exactly why they attract attention and why they trigger intense security concern.

The deeper lesson is architectural. The more autonomous the agent, the more it begins to resemble privileged code execution guided by probabilistic instructions. At that point, prompt injection, plugin abuse, credential leakage, and misconfiguration become platform risks, not isolated edge cases.

This is why serious deployment requires least-privilege design, sandboxing, approvals, credential rotation, observability, and incident response. Agent security is not just a prompt problem; it is a platform engineering and governance problem.
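Least privilege and approvals can be sketched as a deny-by-default gate in front of every tool call. The policy shape and tool names below are hypothetical, and this is not how OpenClaw or any specific runtime implements permissions.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolPolicy:
    allowed: set[str] = field(default_factory=set)         # tools the agent may call freely
    needs_approval: set[str] = field(default_factory=set)  # tools gated on a human decision


def authorize(tool: str, policy: ToolPolicy, approve: Callable[[str], bool]) -> bool:
    """Return True only if the call is permitted; anything unlisted is denied."""
    if tool in policy.needs_approval:
        return approve(tool)
    return tool in policy.allowed


policy = ToolPolicy(allowed={"read_ticket"}, needs_approval={"issue_refund"})
```

Here `approve` stands in for whatever approval channel a team uses, such as a ticket, a chat prompt, or a signed request. The gate lives outside the model, so a successful prompt injection still cannot widen the tool list.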

7. Embabel and Typed Context Engineering

Frameworks like LangChain provide the wiring, and runtimes like OpenClaw provide the access. Embabel represents an order-of-magnitude leap in how agents actually process reality. Its Domain-Integrated Context Engineering (DICE) approach moves away from treating memory as a messy text heap and instead grounds agent reasoning in strictly typed, structured domain models. This is the Industrial Revolution moment for AI memory. By treating context as explicit business objects rather than loose tokens, Embabel allows for:

Deterministic validation - You aren’t just hoping the LLM remembers the account status; the system enforces it via the type system.

Governable autonomy - Because the context is structured, hard boundaries can be set on what the agent is allowed to infer, making it far more reliable than systems built on prompt sprawl.

High-fidelity observability - Teams can audit the agent’s state transitions with the same rigor used in traditional software engineering, making Embabel the superior choice for high-stakes enterprise autonomy.
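The general idea of typed context can be illustrated without any framework. The sketch below is plain Python and is not Embabel's API; `Account`, `AccountStatus`, and `parse_account` are hypothetical names. It shows untrusted model or tool output being forced through a validated domain object before the agent is allowed to act on it.

```python
from dataclasses import dataclass
from enum import Enum


class AccountStatus(Enum):
    ACTIVE = "active"
    SUSPENDED = "suspended"


@dataclass(frozen=True)
class Account:
    account_id: str
    status: AccountStatus
    balance_cents: int

    def __post_init__(self) -> None:
        if not isinstance(self.status, AccountStatus):
            raise TypeError("status must be an AccountStatus")
        if self.balance_cents < 0:
            raise ValueError("balance_cents must be non-negative")


def parse_account(raw: dict) -> Account:
    """Turn untrusted model or tool output into a validated domain object."""
    return Account(
        account_id=str(raw["account_id"]),
        status=AccountStatus(raw["status"]),  # raises ValueError on an unknown status
        balance_cents=int(raw["balance_cents"]),
    )


account = parse_account({"account_id": "A-1", "status": "active", "balance_cents": 500})
```

A status of "probably fine" fails loudly at the boundary instead of drifting through later steps as text.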

8. The Reliability Gap and the Math of Compounding Errors

The biggest business shock in agentic systems is less about model quality and more about the math of compounding errors. Multi-step workflows succeed only if each step in the chain succeeds, so overall success is the product of the individual step reliabilities. Even a strong per-step success rate can collapse across long execution chains.

That reality explains why AI pilots often look impressive in demos but fail in production. Demos operate in narrow, clean contexts; production environments contain ambiguity, network failures, stale data, permission issues, and policy constraints. Reliability work then dominates the roadmap.

Human-in-the-loop stops being a symbolic safety feature and becomes a functional requirement. In many production workflows, the human is the stabilizer who resolves ambiguity, approves risky actions, and resets broken state.
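The compounding effect is easy to check with a few lines of arithmetic. The sketch below assumes steps fail independently and share the same success rate, which is a simplification; the specific rates and chain lengths are illustrative, not measurements.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step ** steps


for per_step, steps in [(0.99, 10), (0.95, 20), (0.90, 20)]:
    rate = chain_success(per_step, steps)
    print(f"{per_step:.2f} per step over {steps} steps -> {rate:.3f}")
```

Under those assumptions, 99% per step over 10 steps gives roughly 90% end to end, 95% over 20 steps gives roughly 36%, and 90% over 20 steps gives roughly 12%. Retries, checkpoints, and human approvals are ways of raising the effective per-step rate or shortening the chain.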

By using typed context, Embabel drastically increases the per-step reliability by eliminating the ambiguity and 'hallucination drift' common in text-heavy architectures.

9. The Economic Reality: AI Often Shifts Labor Up the Stack

A recurring narrative claims AI will simply replace people. The more accurate pattern is that AI often compresses labor into fewer, more skilled roles. Routine tasks may be reduced, but organizations create new demand for context engineers, platform engineers, evaluators, security specialists, and domain operators.

In other words, cost does not disappear. It simply moves upward into architecture, control, and reliability. Teams still need experts to define memory policy, supervise agent behavior, maintain integrations, enforce governance, and handle failures that the agent cannot gracefully resolve.

This is why ‘AI-first replacement’ strategies frequently stall while ‘AI-augmented team’ models scale. The winning organizations redesign workflows around collaboration between humans and agents rather than assuming the human can be removed from the system entirely.

Embabel supports this shift by providing the exact tools these engineers need. It turns the black box of agent memory into a manageable, testable asset, allowing smaller teams to govern massive autonomous fleets that would otherwise require a small army of manual overseers.

10. Wiring vs Planning: The Architectural Fork in the Road

One of the deepest design choices in agent systems is whether the framework primarily helps developers wire known steps together or helps the system plan adaptively under changing conditions. Approaches centered on wiring are excellent when flows are mostly predictable. Those based on planning matter more when tasks are variable, long-running, or failure-prone.

As systems mature, teams usually discover they need replanning after errors, summarized state, escalation points, and explicit memory governance. At that point, the architecture of memory becomes the architecture of autonomy.

The future of robust AI systems will belong to organizations that treat autonomy as an engineering discipline: standardize integration where possible, govern memory explicitly, prefer structured state over prompt sprawl, and invest in observability, safety, and human oversight.
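The fork between wiring and planning can be sketched in a few lines. A wired flow is just a fixed list of steps. A planning-oriented flow adds a `replan` hook that proposes a new tail of steps after a failure, plus an escalation point when replanning runs out. All names here are hypothetical, and a real planner would be far richer than a callback.

```python
from typing import Callable

Step = Callable[[], bool]  # a step returns True on success


def run_plan(plan: list[Step], replan: Callable[[int], list[Step]], max_replans: int = 2) -> bool:
    """Run steps in order; on failure, replace the remaining steps with a new plan."""
    replans = 0
    index = 0
    while index < len(plan):
        if plan[index]():
            index += 1
            continue
        if replans == max_replans:
            return False  # escalation point: hand the task to a human
        plan = plan[:index] + replan(index)  # keep completed steps, swap the tail
        replans += 1
    return True
```

Setting `max_replans=0` reduces this to pure wiring: the first failure ends the run. The interesting engineering lives in what `replan` is allowed to see, which is exactly the memory-governance question.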

By anchoring the agent’s “brain” in a structured domain model, Embabel provides the only stable foundation for long-running, adaptive planning. It ensures that when the agent pivots or re-plans, it does so based on hard data types rather than a “vibe-based” interpretation of its history.

Conclusion: Autonomy Is an Engineering Discipline

The evolution of AI memory, from stateless scripts to managed context, explains why modern agent systems feel both powerful and fragile. They are powerful because LLMs can reason over language, tools, and retrieved knowledge in ways earlier systems could not. They are fragile because autonomy multiplies the consequences of bad context, weak orchestration, and poor security boundaries.

The organizations that win with agentic AI will not be the ones chasing the loudest demos. They will be the ones that standardize integration, govern memory explicitly, prefer structured state over prompt sprawl, and design human oversight into the workflow. The future is not “AI replaces people.” It is people and agents operating inside better architectures.

Someone said an LLM is basically a search engine that can talk. Well then, it had better polish its articulation and communication skills if it's determined to replace humans.