The Information Arc: From Talking Drums to the Neural Frontier

Information is not just the stuff in books or the data on your hard drive. As James Gleick argues in his seminal work, The Information, it’s the blood, the fuel, and the very fabric of the universe. To understand where we are going with AI and Brain-Machine Interfaces (BMI), we must first understand how we learned to separate the “message” from the “matter.”

This piece is inspired by The Information by James Gleick, which traces the evolution of information from early communication to modern information theory. Through concepts like entropy, noise, and compression, the book reveals how information underpins science and technology. It’s a highly recommended read if you want a deeper intuition for data, signals, and meaning. You can find it on Amazon here.

Aggrey

Information Theory · Shannon Entropy · Cybernetics · Codes & Compression · Robotics · History of Computing · Philosophy of Information

10 min read · June 20, 2026

Redundancy beats noise

The Acoustic Bit: Talking Drums and the Birth of Redundancy

Long before the telegraph, one of the earliest “high-speed” long-distance communication networks existed in the rainforests of Africa. The “talking drums” were not merely signaling devices; they reproduced the tones of spoken tonal languages, making them a complex language system in their own right.

The genius of the drum was how it solved the problem of ambiguity. Drumbeats carry only the tones of speech, without its vowels and consonants, so a single pattern of beats could match a dozen different words. The solution? Redundancy. To say “moon,” a drummer wouldn’t just strike once; they would send a poetic phrase like “the moon that looks down on the earth.” The extra beats added “bits” to the message so the signal could survive the “noise” of the jungle. It was an early practical use of the principle that Claude Shannon would later put on a mathematical footing, and that error-correcting codes exploit: redundancy protects a message against noise. Here lies one of the first lessons of information: to be understood, you must often say more than is strictly necessary.
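The drummers’ trick has a simple modern counterpart: the repetition code, the most basic error-correcting code. The sketch below is an analogy, not a model of real drum languages. It assumes a binary message, a channel that flips each bit independently with probability 0.1 (an arbitrary choice), and illustrative helper names (`encode`, `add_noise`, `decode`). Each bit is sent three times and the receiver takes a majority vote.

```python
import random

def encode(bits, n=3):
    # Repeat every bit n times, the way a drummer expands "moon" into a phrase.
    return [b for b in bits for _ in range(n)]

def add_noise(bits, flip_prob, rng):
    # Flip each transmitted bit independently with probability flip_prob.
    return [b ^ 1 if rng.random() < flip_prob else b for b in bits]

def decode(received, n=3):
    # Majority vote inside each group of n copies.
    return [int(sum(received[i:i + n]) > n // 2) for i in range(0, len(received), n)]

rng = random.Random(0)  # fixed seed so the run is reproducible
message = [rng.randint(0, 1) for _ in range(1000)]

plain = add_noise(message, 0.1, rng)                      # sent once, no redundancy
protected = decode(add_noise(encode(message), 0.1, rng))  # sent three times

errors_plain = sum(a != b for a, b in zip(message, plain))
errors_protected = sum(a != b for a, b in zip(message, protected))
print(f"errors without redundancy: {errors_plain}, with 3x repetition: {errors_protected}")
```

With three copies, a bit is lost only when at least two of them are flipped, which is far less likely than a single flip. The price is a message three times longer: the same trade of length for reliability the drummers made.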

Standardization scales meaning

The Persistence of the Word: The Dictionary as the First Compiler

If drums were the first “streaming” service, writing was the first “storage” medium. But written English stayed unruly until the dictionary arrived. Gleick’s account of the early English “wordbooks,” such as Robert Cawdrey’s Table Alphabeticall of 1604, suggests a fitting metaphor: the dictionary acted as the first word compiler.

Before dictionaries, spelling was a suggestion and meaning was fluid. The dictionary “compiled” the fuzzy logic of human speech into a standardized, searchable API. It brought a “logic and order” to language that allowed for the scaling of knowledge. Once you have a dictionary, you have a fixed set of symbols. You have a “lookup table.” This standardization was the essential precursor to any form of automated thought; you cannot program a machine to think if the words it uses change their meaning every fifty miles.
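The “lookup table” idea is concrete enough to sketch. Once headwords are fixed and sorted alphabetically, finding one becomes a binary search rather than a read-through of the whole book. The entries and the `look_up` helper below are hypothetical illustrations, not taken from Cawdrey; Python’s standard `bisect` module does the searching.

```python
from bisect import bisect_left
from typing import Optional

# Hypothetical entries; what matters is that the headwords are fixed and alphabetized.
WORDBOOK = sorted([
    ("moon", "the natural satellite of the earth"),
    ("drum", "a percussion instrument played by striking"),
    ("cipher", "a method of secret writing"),
    ("telegraph", "an apparatus for sending messages by wire"),
])
HEADWORDS = [word for word, _ in WORDBOOK]

def look_up(word: str) -> Optional[str]:
    # Binary search: alphabetical order lets each comparison discard half the book.
    key = word.lower()
    i = bisect_left(HEADWORDS, key)
    if i < len(HEADWORDS) and HEADWORDS[i] == key:
        return WORDBOOK[i][1]
    return None  # the word is not in this (tiny) lookup table

print(look_up("Moon"))
```

A spelling that drifts (“mone” instead of “moon”) simply fails to match, which is exactly why fixed spellings were a precondition for mechanical lookup.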

Symbols become executable

The Analytical Engine: Mapping Thought into "Wheel-Work"

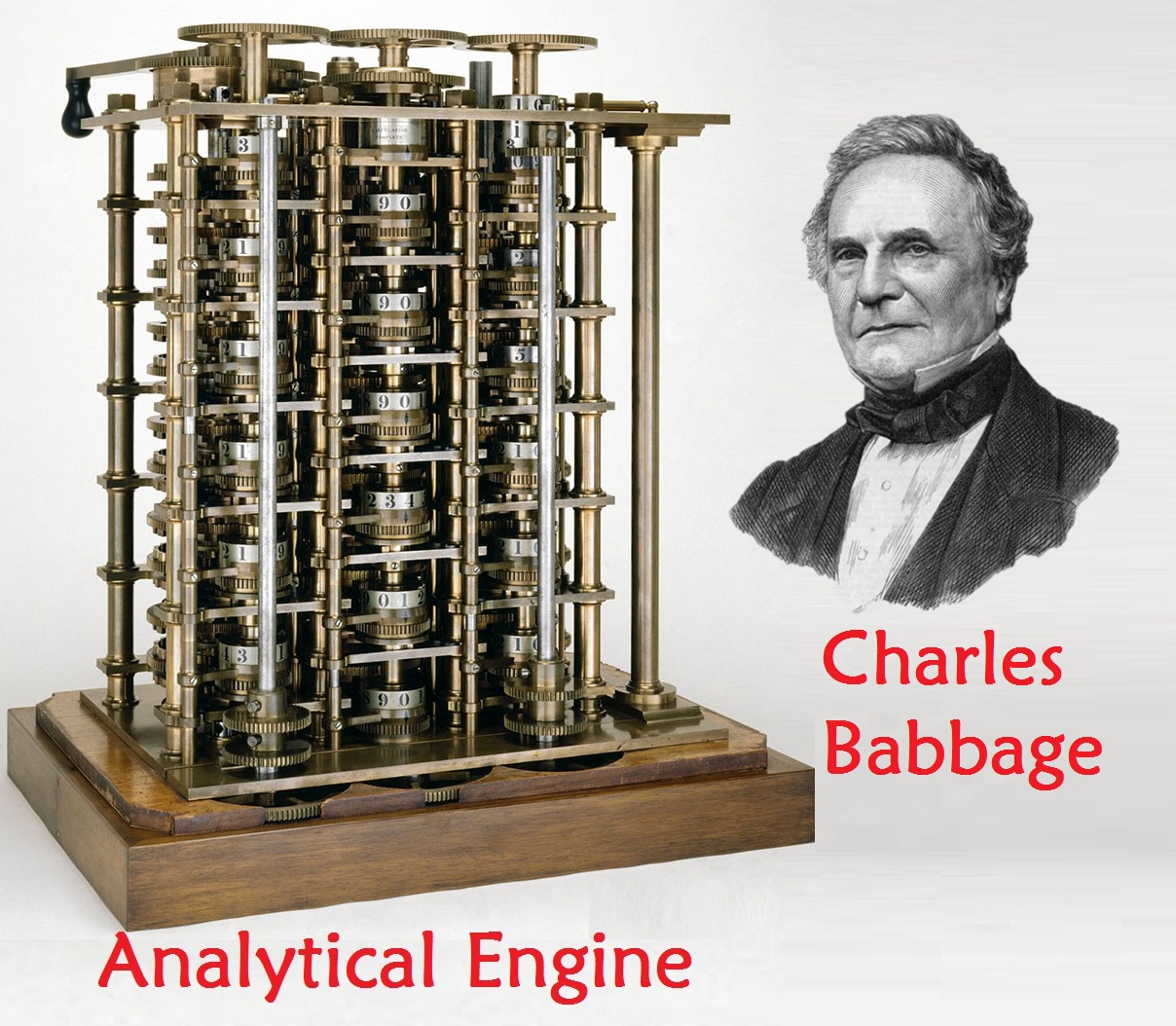

In the 19th century, Charles Babbage and Ada Lovelace made the ultimate leap: they realized that logic could be physical. Babbage’s Analytical Engine was the first design for a general-purpose computer. It separated a processing unit, which Babbage called “the Mill” and which we would now call an arithmetic logic unit (ALU), from a “Store” that held numbers, what we now call memory.

Lovelace’s insight was even more profound than Babbage’s. She realized that the machine didn’t have to process only numbers; it could process any symbolic information. If you could map music, logic, or language onto the “wheel-work” of the gears, the machine could “think” about them. This was the transition from “calculating” to “computing.” Information was no longer just a record of the past; it was a process that could be executed.
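Lovelace’s point about music can be illustrated in a few lines: once notes are mapped to numbers, a purely arithmetic machine can manipulate a melody without “knowing” anything about music. This is a hypothetical illustration, not something Lovelace wrote; the twelve note names and the `encode`, `decode`, and `transpose` helpers are assumptions made for the example.

```python
NOTES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def encode(melody):
    # Symbol -> number: each note name becomes its position in the octave.
    return [NOTES.index(note) for note in melody]

def decode(numbers):
    # Number -> symbol, wrapping around the 12-note octave.
    return [NOTES[n % 12] for n in numbers]

def transpose(melody, semitones):
    # The "Mill" only ever adds integers; the musical meaning lives in the mapping.
    return decode([n + semitones for n in encode(melody)])

print(transpose(["C", "E", "G"], 2))
```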

Bits break free of matter

A Nervous System for the Earth: The Telegraphic Medium

The telegraph was the “Great Divide.” Before it, information moved at the speed of a horse or a ship, tied to letters, couriers, and other physical carriers. The telegraph changed the medium to discrete electrical pulses.

This was the first time “bits” (dots and dashes) moved at scale independently of physical objects. It created a “global nervous system.” The telegraph forced a compression of language (telegraphese) and a new awareness of time. Because information could move almost instantly, the “present moment” expanded. Once the transatlantic cable was working, a merchant in London could know today’s price of cotton in New York, not the price from three weeks ago. The world became synchronized by the pulse of the wire.
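Dots and dashes make the shift to discrete symbols easy to see. The sketch uses a small subset of International Morse code; the `MORSE` table and `to_morse` helper are illustrative, not a complete encoder. Notice that the most common English letters, E and T, get the shortest codes: a built-in compression, long before telegraphese started squeezing the words themselves.

```python
# A subset of International Morse code, enough for the examples below.
MORSE = {
    "A": ".-", "D": "-..", "E": ".", "H": "....", "I": "..", "N": "-.",
    "O": "---", "R": ".-.", "S": "...", "T": "-", "W": ".--",
}

def to_morse(text: str) -> str:
    # Letters are separated by spaces and words by " / ";
    # letters outside the subset above raise KeyError.
    return " / ".join(
        " ".join(MORSE[ch] for ch in word) for word in text.upper().split()
    )

print(to_morse("SOS"))  # ... --- ...
print(to_morse("the end"))
```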

True/False becomes hardware

New Wires, New Logic: The Marriage of Boole and Electricity

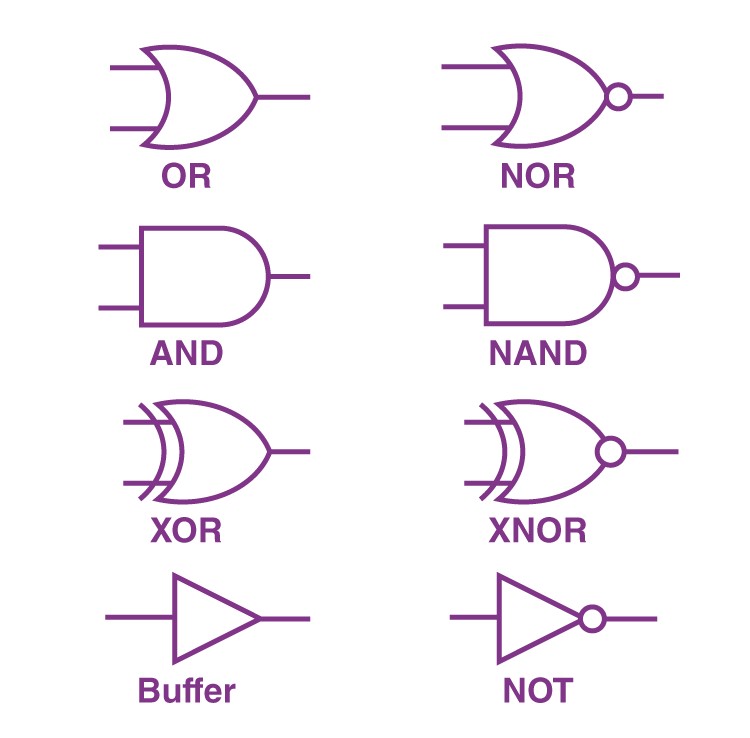

While the telegraph wires were spreading, George Boole was reinventing logic. He realized that the ancient logic of Aristotle could be treated as a form of algebra. By reducing logical statements to “True” or “False” (1 or 0), he created a mathematical language for what his 1854 book called the “Laws of Thought.”

However, Boole’s logic sat on a shelf for decades as a philosophical curiosity. It wasn’t until 1937 that Claude Shannon, in his master’s thesis, realized Boole’s “0 and 1” logic was the perfect language for the “On/Off” states of electrical switches. This was the Great Synthesis: electricity could not only power a lightbulb, it could also carry a logical argument.
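Shannon’s observation can be checked with a truth table: two switches wired in series behave like Boole’s AND, and two wired in parallel behave like OR. The function names are illustrative; each boolean stands for a switch being closed (True) or open (False).

```python
from itertools import product

def series(a: bool, b: bool) -> bool:
    # Current flows through two switches in series only if both are closed.
    return a and b

def parallel(a: bool, b: bool) -> bool:
    # Current flows through two switches in parallel if either one is closed.
    return a or b

print("A B | series parallel")
for a, b in product([False, True], repeat=2):
    print(int(a), int(b), "|", int(series(a, b)), "     ", int(parallel(a, b)))
```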

Uncertainty becomes measurable

The Information Theory Revolution: Defining the "Bit"

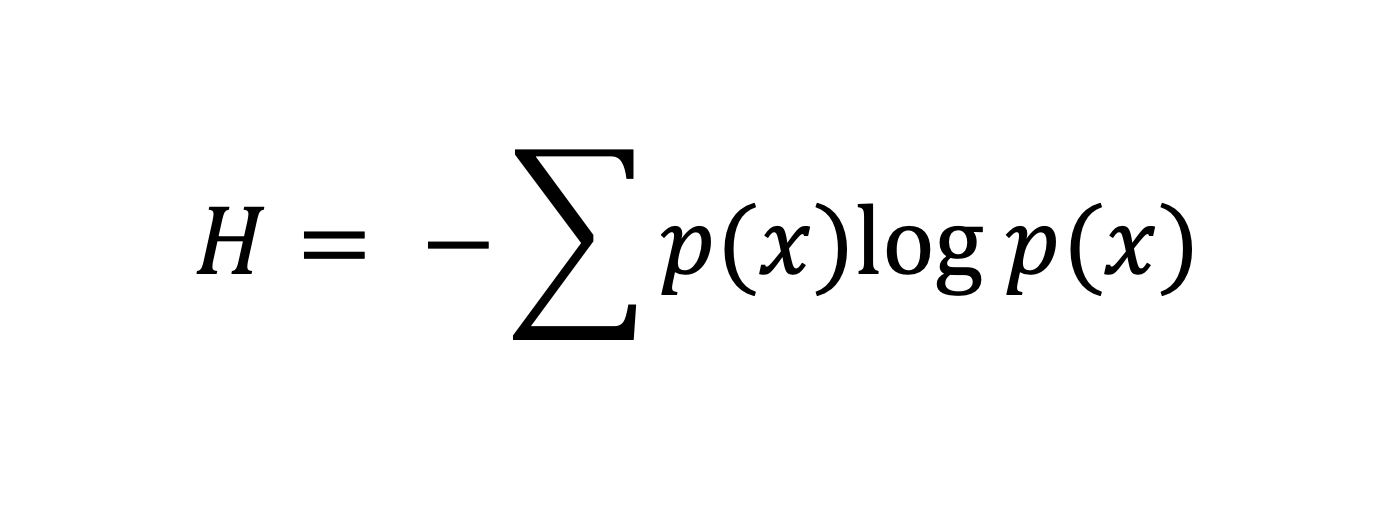

In 1948, Claude Shannon published A Mathematical Theory of Communication, and the world changed forever. Shannon gave us the bit as the fundamental unit of information, but more importantly, he decoupled information from meaning.

In Shannon’s world, a bit is the information needed to settle a choice between two equally likely alternatives. Whether that bit represents a pixel in a photo or a letter in a DNA sequence is irrelevant to the theory. This allowed us to measure information complexity across domains. He also brought entropy into communication: the more random or surprising a message is, the more information it carries.
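Shannon’s entropy is H = −Σ p · log₂ p, summed over the possible symbols, where p is each symbol’s probability. The sketch below makes a simplifying assumption: it uses the symbol frequencies inside a single message as those probabilities. The function name is illustrative.

```python
import math
from collections import Counter

def shannon_entropy(message: str) -> float:
    """Average information per symbol in bits: the sum of p * log2(1/p)."""
    counts = Counter(message)
    total = len(message)
    return sum((c / total) * math.log2(total / c) for c in counts.values())

for text in ["aaaa", "abab", "abcd"]:
    print(text, shannon_entropy(text))  # 0.0, 1.0, 2.0 bits per symbol
```

A message that never varies carries no surprise and therefore no information; four equally likely symbols need two bits each.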

This was the moment information became a measurable quantity, something that could be counted as precisely as energy or mass.

Feedback loops create order

The Cybernetics Movement: Information as Control

In the late 1940s and 1950s, the cybernetics movement, led by Norbert Wiener, drew on the same ideas as Shannon and applied them to time and movement. Wiener’s 1948 book defined cybernetics as the study of “control and communication in the animal and the machine.”

The core idea was the feedback loop. Information isn’t just a one-way stream; it is a circular process. Sensors gather information, the system processes it, and an effector acts on the world, which creates new information for the sensors. This perspective turned the “mind” into an information-processing system. It suggested that a thermostat and a human brain are doing the same thing: using information to maintain order against the chaos of the environment.
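A thermostat is the textbook cybernetic loop, and a toy simulation shows the sense, decide, act cycle. Everything numeric here is an arbitrary assumption chosen to make the loop visible: a room that leaks 5% of its temperature difference to the outside each step, a heater adding 1 degree per step, a 20 °C setpoint, and a ±0.5 °C switching band.

```python
def simulate_thermostat(setpoint=20.0, outside=5.0, band=0.5, steps=200):
    temp, heater_on, history = outside, False, []
    for _ in range(steps):
        # Sense and decide: switch only when the reading leaves the band.
        if temp < setpoint - band:
            heater_on = True
        elif temp > setpoint + band:
            heater_on = False
        # Act: the heater adds heat while the room leaks heat outside.
        temp += (1.0 if heater_on else 0.0) - 0.05 * (temp - outside)
        history.append(temp)  # the new state is what the sensor reads next
    return history

history = simulate_thermostat()
tail = history[-50:]
print(f"after warm-up the room stays between {min(tail):.2f} and {max(tail):.2f}")
```

Without the loop, the room would drift to the outside temperature (or, with the heater stuck on, overshoot to 25 °C in this model); with it, feedback holds the temperature in a narrow band around the goal.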

Information has a cost

The Physics of Information: Thermodynamics and Entropy

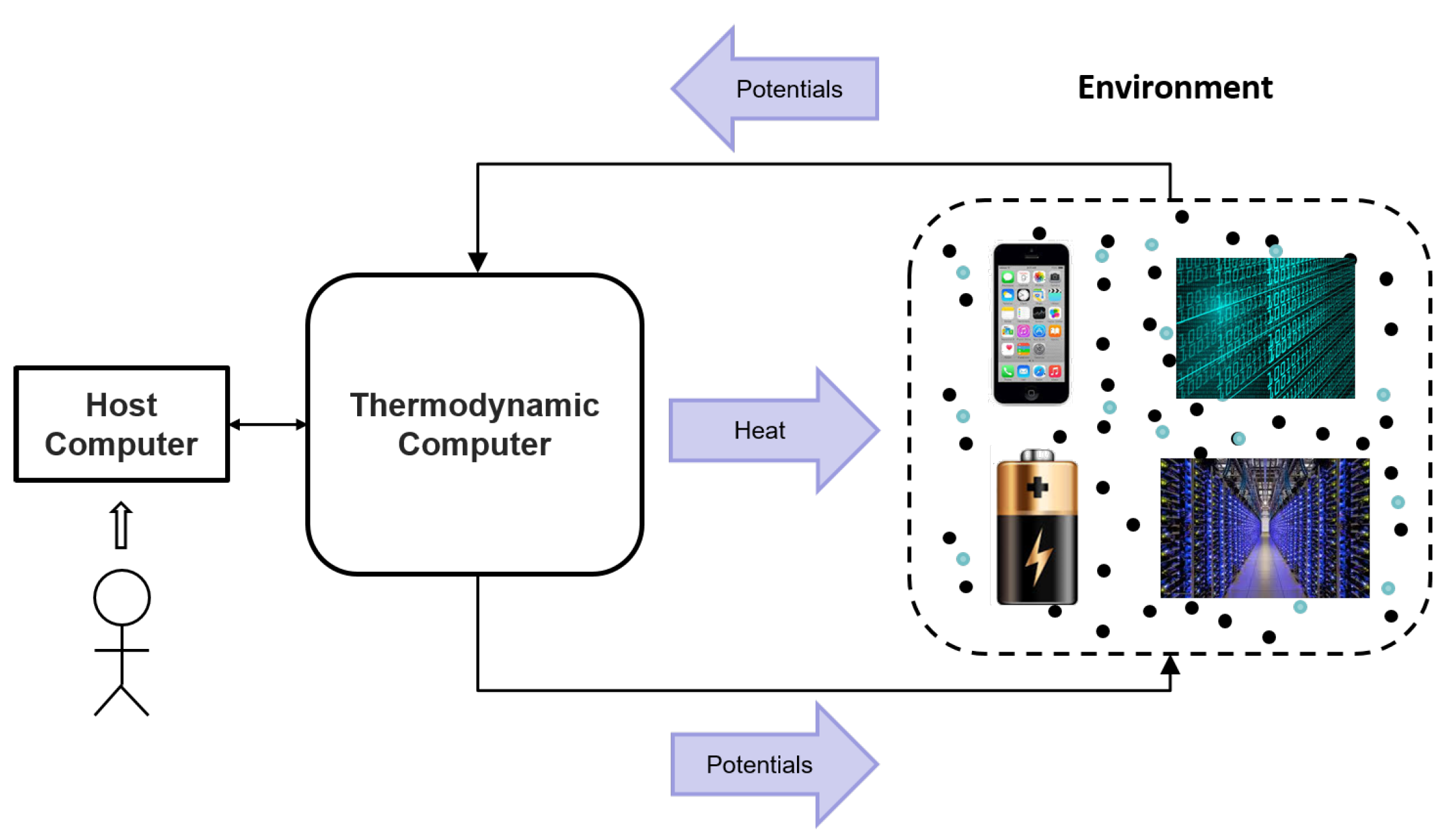

Gleick’s history takes an unexpected, brilliant turn into physics. We learned that information is not “free.” Rolf Landauer showed in 1961 that erasing a bit of information must release a minimum amount of heat, and Charles Bennett later built on that result. This linked information theory directly to the second law of thermodynamics.

This is the Maxwell’s Demon problem: to sort “hot” molecules from “cold” ones, a demon needs information. But to keep gathering and storing that information, the demon must eventually erase its memory, and erasing it produces at least as much entropy as the sorting removed. Information is physical. It has a cost.

The complexity of our digital age warms the planet mostly through the power our servers draw, but beneath that engineering cost sits a fundamental law of the universe: no computer that erases bits can run below Landauer’s limit, even in principle.
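Landauer’s bound is easy to put a number on. At absolute temperature T, erasing one bit must dissipate at least kT ln 2 of heat, where k is Boltzmann’s constant. The sketch assumes a room temperature of 300 K; the terabyte line is only a scaling illustration, and the function name is illustrative.

```python
import math

BOLTZMANN = 1.380649e-23  # J/K, exact in the SI since 2019

def landauer_limit(temperature_kelvin: float) -> float:
    """Minimum heat in joules dissipated when one bit is erased at this temperature."""
    return BOLTZMANN * temperature_kelvin * math.log(2)

per_bit = landauer_limit(300.0)  # roughly room temperature
print(f"{per_bit:.3e} J per erased bit")
print(f"{per_bit * 8e12:.3e} J to erase one terabyte (8e12 bits)")
```

That comes to roughly 2.9 × 10⁻²¹ joules per bit, far below what real chips dissipate per operation today. The limit matters as a floor set by physics, not as the main source of data-center heat.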

Limits define intelligence

Computation & The Infinite: Turing, Chaitin, and Memes

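Alan Turing gave us the Turing Machine: a simple machine that reads and writes symbols on a tape, yet can compute anything that any algorithm can (the Church-Turing thesis). But Turing also proved there are limits: no general procedure can decide, for every program, whether it will ever halt. The Busy Beaver problem, introduced later by Tibor Radó, makes the point vivid; its values grow faster than any computable function, so some information is undecidable, forever beyond calculation.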
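A Turing machine fits in a few lines of Python. The sketch below runs the known 2-state, 2-symbol Busy Beaver champion, which halts after 6 steps leaving 4 ones on the tape. The `run_turing_machine` helper and its step budget are illustrative assumptions; the budget exists precisely because, in general, there is no way to know in advance whether a machine will halt.

```python
from collections import defaultdict

# (state, symbol read) -> (symbol to write, head move, next state); "H" means halt.
BB2 = {
    ("A", 0): (1, "R", "B"),
    ("A", 1): (1, "L", "B"),
    ("B", 0): (1, "L", "A"),
    ("B", 1): (1, "R", "H"),
}

def run_turing_machine(rules, start="A", halt="H", max_steps=10_000):
    tape = defaultdict(int)  # unbounded tape; blank cells read as 0
    head, state, steps = 0, start, 0
    while state != halt:
        if steps >= max_steps:
            # No general test can tell us whether the machine would halt later.
            raise RuntimeError("step budget exhausted; halting is undecidable in general")
        write, move, state = rules[(state, tape[head])]
        tape[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    return steps, sum(tape.values())  # steps taken, number of 1s left on the tape

print(run_turing_machine(BB2))  # (6, 4)
```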

Gregory Chaitin took this further with algorithmic information theory, defining the complexity of a string as the length of the shortest program that can produce it. Meanwhile, in 1976, Richard Dawkins introduced the meme. Information was now seen as a replicator. Just as DNA replicates in cells, memes (ideas, songs, catchphrases) replicate in the infosphere of human minds. Information became alive, driven by evolutionary pressure to survive and spread.
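Chaitin’s measure cannot be computed directly (the shortest program is, in general, uncomputable), but ordinary compression gives a rough stand-in: the compressed bytes plus a fixed decompressor form one program that reproduces the data, so compressed size bounds the complexity from above. Using Python’s `zlib` for this is an assumption of convenience, not Chaitin’s own method.

```python
import random
import zlib

def compressed_size(data: bytes) -> int:
    # Upper-bound proxy for algorithmic complexity: size of the zlib-compressed data.
    return len(zlib.compress(data, 9))

patterned = b"01" * 5000  # 10,000 bytes with a very short description
rng = random.Random(0)    # seeded so the "noise" is reproducible
noise = bytes(rng.randrange(256) for _ in range(10_000))

print(compressed_size(patterned), compressed_size(noise))
```

The patterned string shrinks to a few dozen bytes, while the random one barely shrinks at all: it has no description much shorter than itself.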

From storage to translation

The 2026 Frontier: Managing the Flood

We have moved past the history of the bit and into the era of integrated intelligence. In 2026, our view of the world is no longer about storing information, but about seamless translation between the digital and the physical.

The LLM as the Universal Text Compiler, at least for now. If the dictionary was the first "word compiler," the Large Language Model (LLM) is the final one. And if we solemnly believe this to be true, we find ourselves in a position strikingly similar to that of a Chevalier of the Maison du Roi in 1675, tasked with protecting Queen Marie-Thérèse, the wife of the Sun King.

As this knight stands sentinel, he watches the King’s advisors, the early "information architects," poring over the Académie française’s dictionary, a project still in progress that would not be published until 1694. To him, civilization has reached its absolute terminal peak. He watches them use these standardized "compilers" for statecraft, applying the Code Louis to unify French law into a single searchable logic, and for clandestine warfare, using the Rossignols’ "Great Cipher" to encrypt letters that decide the fate of the Dutch War.

To the Chevalier, the dictionary is not just a book; it is a divine machine of order that has finally tamed the "noise" of the world. He believes that by pinning every word to a single definition, his King has achieved the ultimate control over reality.

Today, we look at the Large Language Model with that same breathless wonder, having moved from programming computers with rigid, brittle code to prompting them with the raw redundancy of human language. By ingesting vast swaths of written text, LLMs have transformed our collective "noise" into a multidimensional map of thought, acting as the final, supreme wordbooks of the digital age. We feel, as the Chevalier did, that we have finally mapped the boundaries of the "infosphere."

We stand on the precipice of a reality where intent becomes execution without the friction of language, proving the "word" was always a bottleneck. Just as the telegraph's discrete pulses once obliterated the Chevalier’s world of ink, our era of prompting is merely a transient waypoint before the medium of language disappears. The 2026 frontier of neural integration and autonomous robotics suggests the future is not about mastering the flood, but transcending the interface entirely. This informational deluge is not a disaster to be survived, but the necessary substrate for an unimaginably more complex evolution.

Robots and Drones: Information with Legs. The cybernetics of the 1950s has matured into autonomous infrastructure.

Sure, Ada Lovelace was more interested in Babbage's Analytical Engine than in the Silver Lady, the dancing automaton Babbage owned. But like Boole's logic, that dream of a mechanical body sat on the shelf until someone better placed in space and time decided to pursue the unknown, this time with robotics.

Self-driving cars and drone swarms are essentially information endowed with spatial intelligence. They do not just move through space; they calculate it, turning the chaotic physical world into a high-fidelity, real-time bitmap. By processing millions of variables through the "Laws of Thought" Boole pioneered, these machines navigate the entropy of a city street with the same discrete precision that once lived only in the abstract pulses of a telegraph wire. In 2026, the distinction between a data packet and a moving vehicle has blurred; the robot is simply a thought that has learned how to walk.

Brain-Machine Interfaces (BMI): The Complement of the Medium. For thousands of years, the bottleneck of information was the human body. With modern BMIs (like Neuralink and Synchron), we are supplementing the medium itself.

In 2026, we are shifting from the external transmission of bits on a wire to the internal synchronization of spikes in a neuron, finally working around the biological bottleneck. The interface is no longer a tool we hold, but a bridge merging symbolic processing with the global infosphere, recasting paralysis and some disabilities as signal-processing problems to be refactored rather than purely biological failures. By decoupling the mental "message" from the physical "matter," we are moving toward a future where intent becomes execution without the friction of language.

We have spent centuries trying to solve the problem of how to send a message through the noise of the physical world. Now that the message has become the world itself, perhaps the most revolutionary act of information theory is knowing when to switch the receiver off. You can start by touching some grass as you reflect on what you've read, so to speak.

We are no longer just observers of information history, we are becoming the information itself.

No, this blog doesn't have ad analytics lol