The Java Renaissance: Why the “Rewrite in Rust” Era Is Quietly Ending

Aggrey Lelei

Distributed Systems · JVM · Infrastructure · Cloud Computing

Jan 5, 2026

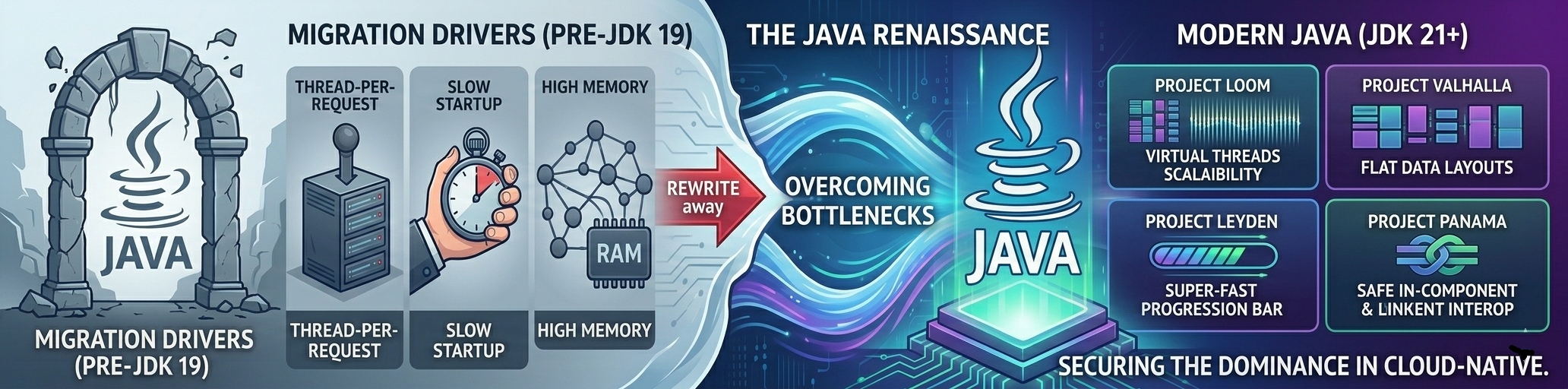

Java had a scaling problem, and people felt it in their pager rotations. But the platform that powered the internet-scale era didn’t stand still. The weaknesses that drove teams away from the JVM are now being addressed at the platform level.

Java has been the elephant in the room for a while

For much of the last decade, rewriting Java systems became a rite of passage for serious infrastructure teams. If you wanted to scale cheaply, survive unpredictable traffic, or keep cloud bills under control, the accepted wisdom was blunt: Java had hit its limits. This belief wasn’t ideological. Teams watched thread counts climb into the tens of thousands, garbage collection pauses bleed into tail latencies, and memory limits get violated in containers that should have been fine. Systems stayed correct but became operationally fragile.

Java didn’t fail outright; it failed expensively. In the pre-cloud era, many of these issues were survivable. Services ran for months. Heaps grew large and stayed warm. Startup cost was amortized across long-lived processes. A few extra gigabytes of RAM could hide architectural inefficiencies.

Cloud-native architectures removed that buffer.

- Autoscaling punished slow startup.

- Multitenancy exposed memory overhead.

- High fan-out microservices amplified synchronization costs.

So teams rewrote:

- DoorDash moved critical services to Go.

- Discord rebuilt infrastructure in Rust.

- Cloudflare invested heavily outside the JVM.

Concurrency was the first fault line

Classic Java servers mapped each incoming request to an operating system thread. For years, this model scaled “well enough” by adding more machines and increasing thread pool sizes. Eventually, that strategy hit a wall.

Operating system threads are heavyweight. Each thread reserves stack memory whether it uses it or not, context switches are expensive, and kernel schedulers are designed for thousands of threads, not hundreds of thousands. As concurrency increases, especially in I/O-bound workloads, overhead starts to dominate useful work.

To cope, Java frameworks began contorting themselves. Async callbacks proliferated. Reactive streams became fashionable. Thread pools were tuned by trial, error, and superstition. Debugging grew painful as stack traces stopped resembling real control flow.

Go’s goroutines reset expectations. Because they are lightweight and scheduled by the Go runtime rather than the kernel, running millions of concurrent tasks became normal and blocking I/O was no longer taboo. In comparison, the JVM’s thread-per-request model suddenly looked obsolete.

Project Loom doesn’t try to copy goroutines. Instead, it removes the constraint that made them necessary. Virtual threads are cheap and abundant, and they’re scheduled by the JVM rather than the operating system. When a virtual thread blocks on I/O, it is transparently unmounted from its carrier thread, freeing the OS thread to run something else. No callbacks. No reactive rewrites. No ecosystem fracture.
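To make the unmounting behaviour concrete, here is a minimal sketch assuming JDK 21 or later, where virtual threads are final. Each of 10,000 tasks makes an ordinary blocking call (`Thread.sleep`, standing in for I/O) on its own virtual thread. No callbacks or reactive types are involved. The class and method names are illustrative only.

```java
import java.time.Duration;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicInteger;

public class VirtualThreadsDemo {

    // Submits taskCount tasks, each blocking briefly (a stand-in for an I/O
    // call), and returns how many of them completed.
    static int runBlockingTasks(int taskCount) {
        AtomicInteger completed = new AtomicInteger();
        // Creates one new virtual thread per submitted task (JDK 21+).
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < taskCount; i++) {
                executor.submit(() -> {
                    // Blocking call: the virtual thread unmounts from its carrier
                    // while it sleeps, so the carrier can run other virtual threads.
                    Thread.sleep(Duration.ofMillis(100));
                    completed.incrementAndGet();
                    return null;
                });
            }
        } // close() waits for every submitted task to finish
        return completed.get();
    }

    public static void main(String[] args) {
        System.out.println(runBlockingTasks(10_000)); // 10000
    }
}
```

With a fixed pool of platform threads, the same 10,000 sleeping tasks would have to queue behind the pool size. Here they overlap, because a sleeping virtual thread does not hold on to an OS thread.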

The deeper victory is continuity. Existing blocking APIs, JDBC drivers, and synchronous libraries scale without modification. Debugging stays familiar. Stack traces remain meaningful. Observability tooling continues to work. This is scalable concurrency without abandoning Java’s mental model.
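The continuity argument is easiest to see with code that was never written for virtual threads. In this sketch, `receive` is plain blocking code built on `BlockingQueue.take()`, and it runs unchanged on a virtual thread (JDK 21+). The names `receive` and `deliverOnVirtualThread` are hypothetical. One caveat applies: on JDK 21 through 23, blocking while inside a `synchronized` block pins the virtual thread to its carrier. JDK 24 (JEP 491) removed that limitation.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.atomic.AtomicReference;

public class ContinuityDemo {

    // Plain blocking code: no futures, no callbacks, no knowledge of virtual threads.
    static String receive(BlockingQueue<String> inbox) throws InterruptedException {
        return inbox.take(); // parks the calling thread until a message arrives
    }

    // Runs receive() unchanged on a virtual thread and reports where it ran.
    static String deliverOnVirtualThread(String message) {
        BlockingQueue<String> inbox = new ArrayBlockingQueue<>(1);
        AtomicReference<String> outcome = new AtomicReference<>();
        Thread consumer = Thread.ofVirtual().name("consumer").start(() -> {
            try {
                String kind = Thread.currentThread().isVirtual() ? "virtual" : "platform";
                outcome.set(kind + ": " + receive(inbox));
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        try {
            inbox.put(message);
            consumer.join(); // wait for the consumer before reading its result
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return outcome.get();
    }

    public static void main(String[] args) {
        System.out.println(deliverOnVirtualThread("hello")); // virtual: hello
    }
}
```

If `receive` threw, its stack trace would show the ordinary call chain through `receive` and the lambda, rather than a trail of callback frames.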

Native code exposed the JVM’s sharpest edges

JNI worked, until it didn’t. Native integrations that were tolerable at small scale became liabilities at large scale. JNI exposed raw pointers, relied on manual memory discipline, and trusted developers not to break invariants the JVM itself enforces everywhere else.

Native code crashed instead of degrading gracefully. For teams operating latency-sensitive systems or interacting with hardware, this fragility was unacceptable. Memory safety ended exactly where performance began.

Rust’s rise here wasn’t accidental. Its guarantees around memory layout, lifetimes, and ownership gave teams confidence that native code wouldn’t take the process down with it.

Project Panama fundamentally changes this relationship. Instead of treating native code as an opaque danger zone, the JVM now models memory segments, lifetimes, and calling conventions explicitly. Java code can interact with native libraries through structured APIs that make ownership and scope clear.
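Here is a minimal sketch of what those structured APIs look like. It uses the Foreign Function & Memory API (`java.lang.foreign`, final since JDK 22) to call C's `strlen` without JNI glue code. It assumes a 64-bit platform where `size_t` maps to a Java `long`. It also assumes `strlen` is visible through the linker's default lookup, which is the case on mainstream platforms. Recent JDKs print a warning for restricted native access unless `--enable-native-access` is granted. `nativeStrlen` is a hypothetical helper name.

```java
import java.lang.foreign.Arena;
import java.lang.foreign.FunctionDescriptor;
import java.lang.foreign.Linker;
import java.lang.foreign.MemorySegment;
import java.lang.foreign.ValueLayout;
import java.lang.invoke.MethodHandle;

public class StrlenDemo {

    // Linker for the platform's C calling convention.
    private static final Linker LINKER = Linker.nativeLinker();

    // Downcall handle for C's size_t strlen(const char *s); the descriptor
    // states the native signature explicitly instead of hiding it in JNI glue.
    private static final MethodHandle STRLEN = LINKER.downcallHandle(
            LINKER.defaultLookup().find("strlen").orElseThrow(),
            FunctionDescriptor.of(ValueLayout.JAVA_LONG, ValueLayout.ADDRESS));

    static long nativeStrlen(String s) {
        // The confined arena owns the native copy of the string and frees it
        // deterministically when the try block exits.
        try (Arena arena = Arena.ofConfined()) {
            MemorySegment cString = arena.allocateFrom(s); // NUL-terminated UTF-8 copy
            return (long) STRLEN.invokeExact(cString);
        } catch (Throwable t) {
            throw new IllegalStateException("native call failed", t);
        }
    }

    public static void main(String[] args) {
        System.out.println(nativeStrlen("Hello, Panama")); // 13
    }
}
```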

The result is native interoperability that is fast, predictable, and survivable. Java no longer has to choose between performance and safety. Native code becomes an extension of the JVM’s model rather than a breach in it.
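“Survivable” can be checked directly. In this sketch (JDK 22+, no native calls, so no warnings), off-heap memory is owned by an `Arena`. Two classic native bugs, reading past the end of a buffer and using memory after it has been freed, surface as ordinary Java exceptions instead of a process crash. The method names are illustrative.

```java
import java.lang.foreign.Arena;
import java.lang.foreign.MemorySegment;
import java.lang.foreign.ValueLayout;

public class ArenaLifetimeDemo {

    // Allocates four native ints, fills them, and sums them inside the arena's scope.
    static int sumInsideArena() {
        try (Arena arena = Arena.ofConfined()) {
            MemorySegment ints = arena.allocate(ValueLayout.JAVA_INT, 4);
            for (int i = 0; i < 4; i++) {
                ints.setAtIndex(ValueLayout.JAVA_INT, i, i * 10);
            }
            int sum = 0;
            for (int i = 0; i < 4; i++) {
                sum += ints.getAtIndex(ValueLayout.JAVA_INT, i);
            }
            return sum; // 0 + 10 + 20 + 30
        }
    }

    // Reading past the end of a segment is rejected instead of reading stray memory.
    static boolean outOfBoundsRejected() {
        try (Arena arena = Arena.ofConfined()) {
            MemorySegment ints = arena.allocate(ValueLayout.JAVA_INT, 4);
            ints.getAtIndex(ValueLayout.JAVA_INT, 4); // index 4 is one past the end
            return false;
        } catch (IndexOutOfBoundsException e) {
            return true;
        }
    }

    // Using a segment after its arena is closed throws instead of touching freed memory.
    static boolean useAfterCloseRejected() {
        Arena arena = Arena.ofConfined();
        MemorySegment escaped = arena.allocate(ValueLayout.JAVA_INT, 4);
        arena.close(); // frees the memory and invalidates every segment from this arena
        try {
            escaped.getAtIndex(ValueLayout.JAVA_INT, 0);
            return false;
        } catch (IllegalStateException e) {
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println(sumInsideArena());        // 60
        System.out.println(outOfBoundsRejected());   // true
        System.out.println(useAfterCloseRejected()); // true
    }
}
```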

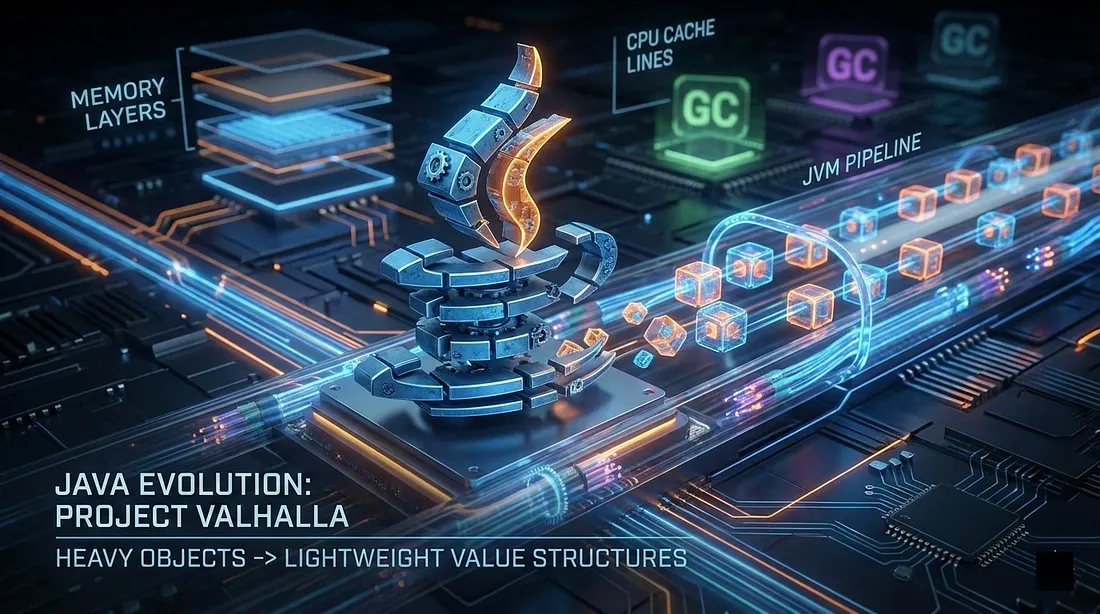

Memory density was the problem no profiler screamed about

Many of Java’s most expensive failures were memory-bound. Object-oriented design came with hidden costs such as headers, pointers, indirections, and fragmented layouts. Millions of small objects scattered across the heap translated into cache misses, bloated memory footprints, and garbage collectors spending more time moving metadata than data.

This manifests subtly but brutally at scale. Memory bandwidth saturates. GC cycles lengthen. Tail latencies climb even when CPU usage looks healthy.

Discord’s rewrite was driven by data density: flattened structures and contiguous memory unlocked dramatic improvements in cache locality and memory efficiency.

Project Valhalla brings that capability into the JVM itself. Value classes give up object identity, which lets the JVM drop object headers and pointer indirection and store data contiguously while preserving type safety and expressiveness. Code remains readable. Data becomes compact.
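Value classes are not yet a standard, non-preview Java feature. This sketch therefore uses today's Java to show the difference between the two layouts: an array of `Point` objects (one header per element plus a reference to follow) versus the manually flattened “struct of arrays” that value classes aim to let the JVM produce automatically. `Point`, `Points`, and `flatten` are hypothetical names for illustration.

```java
public class DensityDemo {

    // Object-per-element layout: each Point is a separate heap object with its
    // own header, and the array stores references to those objects.
    record Point(int x, int y) {}

    // Manually flattened layout: coordinates live in contiguous int arrays,
    // with no per-element header and no pointer to chase.
    static final class Points {
        final int[] xs;
        final int[] ys;

        Points(int n) {
            xs = new int[n];
            ys = new int[n];
        }
    }

    static Points flatten(Point[] points) {
        Points flat = new Points(points.length);
        for (int i = 0; i < points.length; i++) {
            flat.xs[i] = points[i].x();
            flat.ys[i] = points[i].y();
        }
        return flat;
    }

    // Each iteration dereferences a pointer to a separately allocated object.
    static long sumXObjects(Point[] points) {
        long sum = 0;
        for (Point p : points) {
            sum += p.x();
        }
        return sum;
    }

    // Each iteration reads the next int in a contiguous array.
    static long sumXFlat(Points points) {
        long sum = 0;
        for (int x : points.xs) {
            sum += x;
        }
        return sum;
    }

    public static void main(String[] args) {
        Point[] objects = new Point[1_000];
        for (int i = 0; i < objects.length; i++) {
            objects[i] = new Point(i, -i);
        }
        System.out.println(sumXObjects(objects) + " " + sumXFlat(flatten(objects))); // 499500 499500
    }
}
```

Both scans compute the same result. The flattened one pays a readability cost by hand, and that cost is exactly what value classes are meant to remove.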

Project Lilliput attacks the remaining overhead by shrinking object headers where objects are still required. Together, these changes strike at one of the JVM’s oldest weaknesses. In high-scale systems, better memory density not only improves performance but also directly reduces infrastructure cost and operational risk.
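A rough, back-of-the-envelope estimate shows why headers matter. Take a small object with two `int` fields, stored in an array of references. The numbers assume 64-bit HotSpot with compressed class pointers, 4-byte compressed references (heaps under 32 GB), and 8-byte object alignment. They compare the standard 12-byte header, Lilliput's 8-byte compact header (`-XX:+UseCompactObjectHeaders` in recent JDKs), and a fully flattened layout of the kind value classes permit but do not guarantee:

$$
\begin{aligned}
\text{standard header:}\quad & 12 + 2 \times 4 = 20 \;\to\; 24\ \text{B object} + 4\ \text{B reference} = 28\ \text{B per element} \\
\text{compact header:}\quad & 8 + 2 \times 4 = 16\ \text{B object} + 4\ \text{B reference} = 20\ \text{B per element} \\
\text{fully flattened:}\quad & 2 \times 4 = 8\ \text{B per element}
\end{aligned}
$$

At 10 million elements that works out to roughly 280 MB, 200 MB, and 80 MB respectively. Only 80 MB of that is the actual payload.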

Cold starts collided with cloud economics

Java assumed longevity. The cloud assumes ephemerality. Containers spin up and down constantly, and serverless platforms penalize idle memory. Autoscalers expect instances to become useful almost immediately. In this world, multi-second startup times are less a minor annoyance than an architectural failure.

Native images (GraalVM Native Image) helped, but at a cost. Reflection needed explicit configuration, dynamic class loading disappeared, and observability suffered. Many teams found themselves trading runtime flexibility for startup speed, and not always winning.

Project CRaC takes a different approach. Instead of reinitializing the JVM repeatedly, CRaC snapshots a fully initialized, fully warmed process and restores it almost instantly. Initialization cost is paid once and reused.

Project Leyden pushes the idea further by shifting work to build time. Runtime initialization paths shrink. The JVM starts closer to a steady state rather than a cold one. Together, these projects realign Java with cloud economics. Startup time stops being a deal-breaker and becomes an engineering choice.

Ergonomics mattered more than we admitted

History has shown that performance alone doesn’t win ecosystems. Developers choose tools that reduce cognitive load. Eventually we realised that Java’s verbosity wasn’t just aesthetic: boilerplate buried intent and slowed reasoning, and that friction accumulated as systems grew larger and teams moved faster. In that environment, Kotlin’s rise felt less like rebellion and more like relief.

Project Amber modernized Java without fracturing it. Records removed boilerplate while preserving clarity. Pattern matching reduced unsafe casts. Sealed types made invariants explicit. Switch expressions became expressive rather than error-prone.
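Here is a small sketch of those four features working together, assuming JDK 21 or later, where record patterns and pattern matching for `switch` are final. The `Shape` hierarchy is an invented example.

```java
public class AmberDemo {

    // Sealed type: the compiler knows the complete set of permitted subtypes.
    sealed interface Shape permits Circle, Rect {}

    // Records: constructor, accessors, equals/hashCode/toString are generated.
    record Circle(double radius) implements Shape {}
    record Rect(double width, double height) implements Shape {}

    // Switch expression with a type pattern and a record pattern. No default
    // branch and no casts: because Shape is sealed, the compiler checks that
    // every case is covered and rejects the switch if a subtype is missing.
    static double area(Shape shape) {
        return switch (shape) {
            case Circle c -> Math.PI * c.radius() * c.radius();
            case Rect(double w, double h) -> w * h;
        };
    }

    public static void main(String[] args) {
        System.out.println(area(new Rect(3, 4))); // 12.0
        System.out.println(new Circle(1.0));      // Circle[radius=1.0]
    }
}
```

If a new `Triangle` record were added to the `permits` clause, `area` would stop compiling until it handled the new case. That is the “invariants made explicit” point in practice.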

Modern Java is leaner, clearer, and closer to how engineers reason today. The language stopped defending its past and started serving its users.

Closing thoughts

The Rust rewrites were a market signal. They showed that when guarantees around safety, concurrency, memory and startup matter enough, teams will rebuild from scratch to get them. That signal triggered deep architectural change inside the JVM. Not incremental tuning, but structural evolution shaped by modern workload demands. What emerged is a platform transformed by pressure rather than displaced by it.

The “Rewrite in Rust” era wasn’t wrong, just early

It forced Java to evolve. As we move deeper into the second half of the 2020s, the question is no longer “Should we rewrite?”

It’s “Would we still rewrite, knowing what Java looks like now?”

Increasingly, the answer is no.